When uploading data into systems, comma separated value format (CSV) is a common choice. A difficulty can arise when editing CSV files in Excel — date formats can easily (and accidentally) change causing upload failures and end-user frustration. It is possible to write CSV uploading routines to automatically detect date formats and alleviate this problem in many cases.

When uploading data into systems, comma separated value format (CSV) is a common choice. A difficulty can arise when editing CSV files in Excel — date formats can easily (and accidentally) change causing upload failures and end-user frustration. It is possible to write CSV uploading routines to automatically detect date formats and alleviate this problem in many cases.

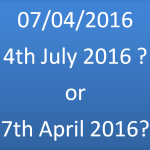

In finance, much of the data in CSV files has (local) date columns, for example a swap trades file would usually have effective date and termination date columns. These date columns can become problematic when a user wants to alter the data using Excel; its natural behaviour is to ‘helpfully’ format the dates according to the user’s language and region. So, for example, 4th July 2016 might appear as 2016-07-04 in the CSV file but could be formatted as 04/07/2016 in the U.K. and 07/04/2016 in the U.S. When the user saves the edited CSV files, the formatting changes are also saved, and the CSV upload to the system can fail.

The frustration caused to users was eloquently captured by one user,

“even a blind man on a galloping horse can see the date format in this file, why can’t the system!”.

When discussed with engineers who have written the CSV uploading routines, a common response is that date format can be ambiguous and so cannot be automatically detected; for example, 04/07/2016 could mean 4th July or 7th April. The engineer is of course completely correct, dates are ambiguous in isolation. However, step back and think what a human does when they open the file and inspect the data, they scan all the dates in the first few rows, find one or more unambiguous dates and then imply the data format from that. If we can mimic that behaviour, we improve our chances of correctly importing the data. Of course, there is still a chance that every date in the file is ambiguous, but what is the chance of that?

High school probability can help us answer that question. Let us consider a simple and ideal case, a CSV file with one date column, and each row of data independent of the other rows, and for the sake of argument assume the year has 4 digits (so 2016 as opposed to just 16). Consider the first row — only 12 days out of 28-31 days are ambiguous, so the chance of an ambiguous date on the first row is less than 50% (we could be sharper on this bound but it doesn’t help much). Then consider the first and second rows; as we have assumed the data in the rows to be independent, the chance of both the first and second rows containing ambiguous dates is less than 25%. More generally,

all things being equal, the chance of the first n rows all containing ambiguous dates is less than 1 in 2n.

This is great news, consider n=10; that is, if we only read the first 10 rows, the chance of all the dates being ambiguous is less than 1 in 1024, or 0.1%. Thus, with only reading a few lines of data we can be very confident of detecting an unambiguous date and therefore determine the date format. There is an overhead to this pre-processing of the data and discovering the format, but for files which consist of 100’s if not 1000’s of rows, it is not worth worrying about given the benefits.

Of course, there will be valid cases contrary to our ideal assumptions above; for example, a trades file with a single ‘what-if’ trade, or a yield curve consisting only of key yearly rates. There is nothing we can do about those cases. However, enough practical cases arise to justify this automatic detection of the date format, it really helps lower the error rates in the system and reduce support calls.

We have put much effort in the discussion above concerning CSV files as it is quite popular and our system supports that format in the UI and the REST API. It is worth noting that if one simply supports the upload of Excel’s own format, .xlsx or .xls, the date formatting issue is avoided completely as the date values are stored as numbers (similar to a Julian day number) and there is no ambiguity whatsoever.